Phone fraud is becoming harder to manage because it no longer relies solely on simple, scripted scams. Many attacks now involve social engineering, repeated account recovery attempts, refund manipulation, and attempts to exploit rushed phone support processes. The National Institute of Standards and Technology’s (NIST) digital identity guidance specifically warns that human-assisted recovery and authentication processes can be vulnerable to social engineering, which is exactly why phone channels remain a meaningful risk surface for customer service and contact center teams.

That is where AI voice agents can help. They do not “detect fraud” by magically knowing intent. They reduce fraud risk by consistently enforcing verification steps, spotting risk signals in real time, slowing down sensitive workflows when needed, and escalating suspicious calls to trained humans with clear context. When deployed well, they make fraud prevention more repeatable without making legitimate customers work harder than necessary.

This guide explains what phone fraud looks like in contact centers, why traditional prevention often fails under pressure, how AI voice agents detect fraud signals, and what best practices help teams protect high-risk call flows safely.

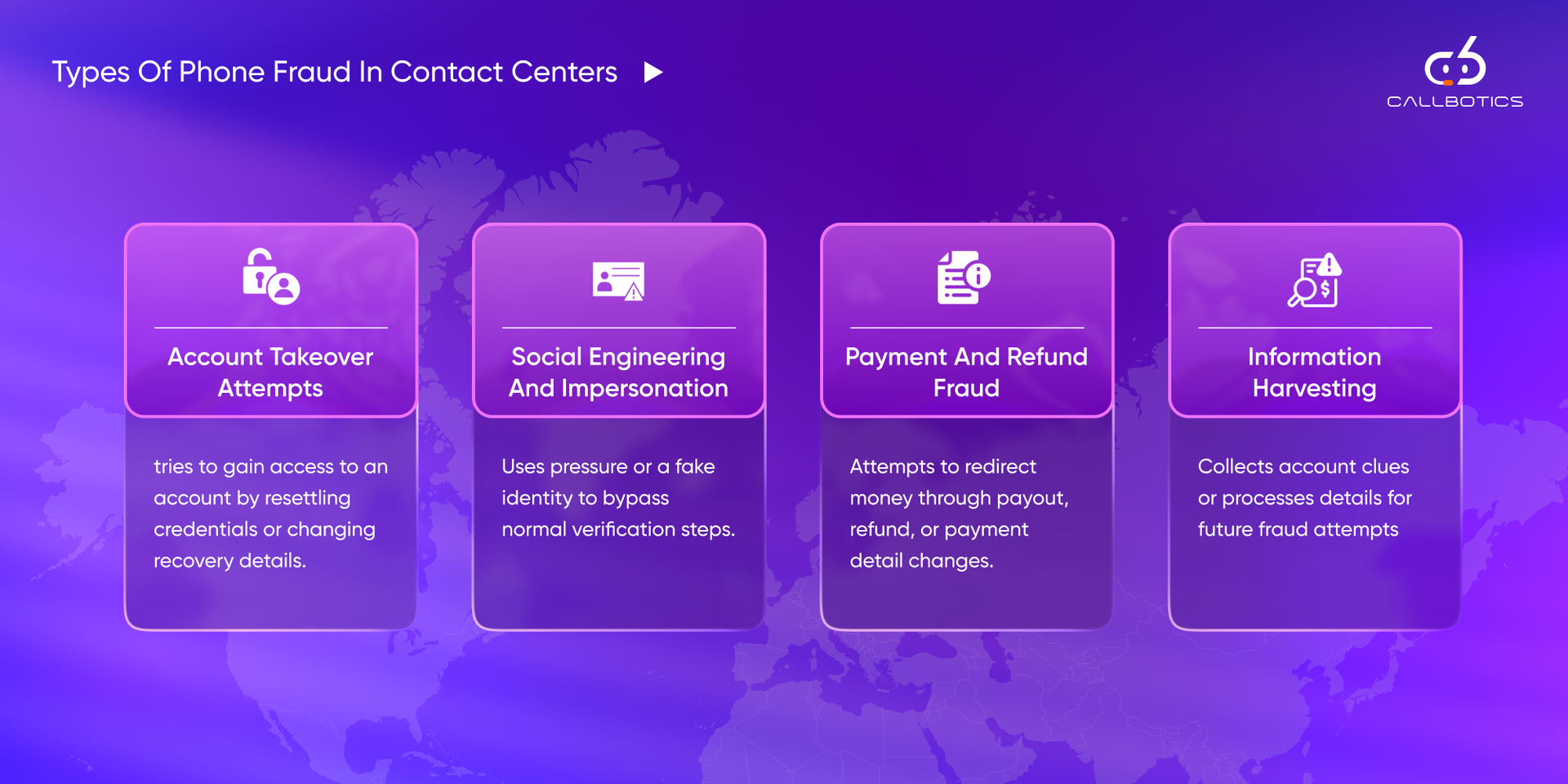

Phone fraud in a contact center usually means a caller is trying to gain access, change something sensitive, move money, or extract information they should not have. The goal is rarely abstract. It is usually tied to account takeover, payment redirection, refund manipulation, or information gathering that makes later fraud easier.

This matters because phone channels are still high-trust environments. A confident caller, a plausible story, and a rushed agent can create the exact conditions fraudsters want. AI voice agents help most when they reduce inconsistency in those moments and make high-risk requests follow stricter rules every time.

Account takeover calls usually involve attempts to reset passwords, change an email address, swap a phone number, or regain access to an account through recovery flows. NIST explicitly notes that human-assisted recovery paths can be vulnerable to social engineering, which makes these interactions especially sensitive.

These calls depend on persuasion rather than technical intrusion. The fraudster may pretend to be the customer, an internal employee, a partner, or someone acting urgently on behalf of an account holder. The goal is to bypass normal controls by creating pressure, confusion, or misplaced trust.

Some callers try to redirect refunds, update payout details, or create conditions for chargebacks and false claims. These are especially risky because they often appear inside otherwise normal service interactions, which makes strong verification and approval logic essential.

Not every fraudulent call asks for a direct transaction. Some are designed to gather process details, customer data, account clues, or verification patterns that can be used in later fraud attempts. These calls are easy to underestimate because they may look like harmless support questions at first.

Explore CallBotics to see how enterprise-ready voice workflows can help protect customer data with stronger verification, controlled access, and safer handling of sensitive call flows.

Traditional fraud prevention fails on calls for a simple reason: call center conditions are rarely ideal. Verification scripts are applied inconsistently, queues create pressure, and repeated fraud patterns are often hard to connect across time when each interaction is handled in isolation. NIST’s guidance around identity and recovery makes clear that human-assisted processes can be weaker when social engineering is involved.

Different agents often apply verification differently, especially under load. One agent may follow every step. Another may shortcut the process because the request sounds plausible or the queue is under pressure. That inconsistency is where a lot of phone fraud risk begins.

Rushed environments create weaker controls. When service levels are slipping, there is a natural temptation to move faster, ask fewer follow-up questions, or approve borderline requests too easily. Fraudsters often rely on that urgency.

A suspicious call does not always look suspicious in isolation. The real pattern may only appear when the same request is attempted multiple times, from unusual numbers, at unusual times, or with slightly changing answers. Without connected visibility, those patterns are easy to miss.

AI voice agents detect fraud through signals, not certainty. They work best when they evaluate patterns in identity, behavior, call context, and request type, then apply the right level of verification or escalation. This is closer to risk-based decisioning than to a simple pass-or-fail check.

| Signal type | What it looks like | Why it matters |
|---|---|---|
| Identity mismatch | Conflicting details, failed questions, inconsistent answers | Suggests the caller may not be who they claim to be |
| Behavior signal | Urgency, pressure, refusal to follow steps, repeated request framing | Often appears in social engineering attempts |
| Context signal | Unusual call time, repeat attempts, sensitive changes after recent account events | Adds risk even if the caller sounds confident |
| Request-risk signal | Password reset, payout change, refund redirection, address/email/phone changes | Some intents are inherently higher risk |
| Number or device signal | Region mismatch, suspicious number type, repeated risky numbers when available | Supports stronger risk scoring where data is available |

Identity mismatches are one of the clearest fraud indicators. If the caller gives conflicting personal details, fails key verification checks, or changes answers mid-flow, risk should rise. NIST’s identity guidance emphasizes stronger verification logic and risk-aware authentication rather than weak fallback practices.

Fraudsters often create urgency. They may push for exceptions, resist normal steps, repeat the same request aggressively, or try to steer the interaction around controls. These are not proof of fraud on their own, but they are important signals when combined with a sensitive request.

Repeated attempts, unusual timing, recently changed account details, or a pattern of similar calls can all raise risk. This is where AI can help more than a manual workflow, because it can apply the same pattern logic consistently across interactions.
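As a rough illustration of applying the same pattern logic across interactions, the sketch below counts sensitive requests per account inside a sliding time window. The class name, the 24-hour window, and the threshold of three attempts are all hypothetical choices, and the code assumes timestamps arrive in non-decreasing order.

```python
from collections import defaultdict, deque


class RepeatAttemptTracker:
    """Counts sensitive requests per account inside a sliding time window.

    Hypothetical sketch: window and threshold are illustrative defaults.
    """

    def __init__(self, window_seconds: float = 86400, threshold: int = 3):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._attempts = defaultdict(deque)  # account_id -> attempt timestamps

    def record(self, account_id: str, timestamp: float) -> bool:
        """Record an attempt and return True once the threshold is reached."""
        attempts = self._attempts[account_id]
        attempts.append(timestamp)
        # Drop attempts that have fallen out of the window.
        while attempts and timestamp - attempts[0] > self.window_seconds:
            attempts.popleft()
        return len(attempts) >= self.threshold
```

A flag from a tracker like this would normally raise the risk level of the call rather than block it outright, since legitimate customers also call back more than once.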

Some intents simply deserve stronger controls. Password resets, payout changes, refund destination changes, and identity field updates should usually trigger more verification than low-risk informational requests.

When number intelligence or related metadata is available, teams can use signals such as region mismatch, number type, or known-risk sources to raise the fraud score. These should support, not replace, the core verification logic.
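One simple way to combine the signal types described above is a weighted score that maps to a risk tier. The sketch below is only an illustration: the signal names, weights, and tier thresholds are hypothetical and would need to be calibrated against a team's own fraud data.

```python
# Hypothetical weights per signal type; not taken from any standard or product.
SIGNAL_WEIGHTS = {
    "identity_mismatch": 40,
    "behavior_pressure": 15,
    "context_anomaly": 15,
    "high_risk_intent": 20,
    "number_risk": 10,
}


def risk_score(signals: set[str]) -> int:
    """Sum the weights of the triggered signals, capped at 100.

    Unknown signal names contribute nothing to the score.
    """
    return min(100, sum(SIGNAL_WEIGHTS.get(name, 0) for name in signals))


def risk_tier(score: int) -> str:
    """Map a score to a tier using illustrative thresholds."""
    if score >= 60:
        return "high"
    if score >= 30:
        return "medium"
    return "low"
```

For example, `risk_tier(risk_score({"identity_mismatch", "high_risk_intent"}))` lands in the high tier, while a single behavior signal on its own stays low, which matches the idea that no individual signal is proof of fraud.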

Detection matters, but prevention matters more. The real value of AI voice agents is not that they “spot fraud” in theory. It is that they can enforce the right workflow every time, add friction only where needed, and send risky calls to the right human path with useful context.

AI voice agents can follow approved verification logic without skipping steps. That consistency is one of the biggest advantages over rushed manual handling, especially on high-risk call intents.

Low-risk requests can follow simpler verification. Higher-risk requests can require stronger checks, more confirmation, or human approval. This is closer to the risk-based model NIST promotes for authentication strength.
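A step-up policy of this kind can be sketched as a lookup from intent to a minimum verification level, raised by one level when risk is elevated. The intent names and level assignments below are assumptions made for illustration only.

```python
from enum import IntEnum


class VerificationLevel(IntEnum):
    BASIC = 1
    STRONG = 2
    HUMAN_APPROVAL = 3


# Hypothetical mapping; each team would define its own intents and levels.
INTENT_LEVELS = {
    "order_status": VerificationLevel.BASIC,
    "address_change": VerificationLevel.STRONG,
    "password_reset": VerificationLevel.STRONG,
    "payout_change": VerificationLevel.HUMAN_APPROVAL,
    "refund_redirect": VerificationLevel.HUMAN_APPROVAL,
}


def required_level(intent: str, elevated_risk: bool = False) -> VerificationLevel:
    """Return the minimum verification level for an intent."""
    # Unknown intents default to the strictest level (fail closed).
    base = INTENT_LEVELS.get(intent, VerificationLevel.HUMAN_APPROVAL)
    if elevated_risk and base < VerificationLevel.HUMAN_APPROVAL:
        return VerificationLevel(base + 1)
    return base
```

Defaulting unknown intents to the strictest level is a deliberate design choice in this sketch: a new or misclassified intent should not silently receive the weakest checks.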

If verification fails or risk remains elevated, the system should not complete the action. It can pause the workflow, route for review, or restrict the request until a trained human approves it.
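The rule above amounts to a fail-closed gate: the action completes only when every condition is met, and any other state stops it. A minimal sketch, assuming a hypothetical three-outcome policy (the outcome names and the split between blocking and holding are illustrative):

```python
from enum import Enum


class Decision(Enum):
    COMPLETE = "complete"
    HOLD_FOR_REVIEW = "hold_for_review"
    BLOCK = "block"


def decide(verification_passed: bool, risk_elevated: bool) -> Decision:
    """Fail-closed gate: only a verified, non-elevated call may complete."""
    if not verification_passed:
        # Failed verification never proceeds, regardless of risk level.
        return Decision.BLOCK
    if risk_elevated:
        # Verified but still risky: wait for a trained human to approve.
        return Decision.HOLD_FOR_REVIEW
    return Decision.COMPLETE
```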

When a risky call is escalated, the handoff should include the request type, what was verified, which signals were triggered, and where the workflow stopped. That reduces the chance of the human reviewer starting from zero.
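The four handoff elements listed above map naturally onto a small structured record. The following sketch uses hypothetical field names and a hypothetical one-line summary format; a real integration would follow whatever the agent desktop or ticketing system expects.

```python
from dataclasses import dataclass


@dataclass
class EscalationHandoff:
    """Context passed to the human reviewer (illustrative field names)."""

    request_type: str
    verified_checks: list[str]
    triggered_signals: list[str]
    stopped_at_step: str

    def summary(self) -> str:
        """Render a one-line summary for the reviewer's screen."""
        verified = ", ".join(self.verified_checks) or "none"
        signals = ", ".join(self.triggered_signals) or "none"
        return (
            f"Request: {self.request_type} | Verified: {verified} | "
            f"Signals: {signals} | Stopped at: {self.stopped_at_step}"
        )
```

Writing "none" explicitly when a list is empty is intentional here, so the reviewer can tell the difference between "nothing was verified" and "the field was left out".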

A fraud-safe system should log what was requested, what was verified, the decision made, and why the call was escalated or blocked. This supports internal review, compliance, and process improvement.
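A log entry covering those fields could be written as one JSON object per decision. The function and field names in this sketch are assumptions, and it records the names of the checks performed rather than the caller's answers.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(
    call_id: str,
    request_type: str,
    verified_checks: list[str],
    decision: str,
    reason: str,
    now: Optional[datetime] = None,
) -> str:
    """Serialize one fraud-review log entry as a JSON line (hypothetical schema)."""
    timestamp = now or datetime.now(timezone.utc)
    record = {
        "timestamp": timestamp.isoformat(),
        "call_id": call_id,
        "request_type": request_type,
        # Names of checks only; the answers themselves are not logged.
        "verified_checks": verified_checks,
        "decision": decision,
        "reason": reason,
    }
    return json.dumps(record, sort_keys=True)
```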

Explore CallBotics to see how voice workflows can enforce verification consistently, route risky calls safely, and create cleaner fraud-review trails across high-risk call intents.

The best place to start is not “all fraud.” It is the call intents where the downside of a bad decision is highest. These are usually the flows that affect account access, money movement, or sensitive identity changes.

These are common entry points for account takeover because they can unlock the rest of the account if verification is weak.

Identity changes should usually trigger stronger checks because they alter how future verification and communication work.

Requests to update bank details, payment instruments, or payout destinations are inherently high-risk and should not be taken lightly.

Refund flows are attractive targets because the fraudster is not always trying to steal the account directly. They may simply be trying to redirect money to a new destination.

Unusually high-value changes, cancellations, or order adjustments can also indicate fraud and should trigger stronger validation where appropriate.

Fraud prevention should be robust but not feel chaotic or accusatory to legitimate customers. The best design protects the workflow without turning every protected call into a painful interrogation.

Verification prompts should sound calm and standard, not suspicious. That helps preserve trust for legitimate callers while still enforcing controls.

Single-step verification questions reduce confusion, improve answer quality, and make it easier to detect inconsistencies cleanly.

Before a system commits a high-risk change, it should restate the request and ask for explicit confirmation. This prevents both fraud and genuine mistakes.
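A confirm-before-commit step can be sketched as a restatement prompt plus a strict check on the reply. The prompt wording and the accepted phrases below are hypothetical; the point of the sketch is that anything other than a clear yes is treated as not confirmed.

```python
# Hypothetical set of replies accepted as explicit confirmation.
EXPLICIT_YES = {"yes", "yes please", "yes i confirm"}


def confirmation_prompt(action: str, detail: str) -> str:
    """Restate the high-risk request before anything is committed."""
    return (
        f"To confirm, you want to {action}: {detail}. "
        "Please say yes to proceed or no to cancel."
    )


def is_explicit_yes(reply: str) -> bool:
    """Accept only a clear yes; ambiguous or empty replies do not confirm."""
    normalized = reply.strip().lower().rstrip(".!")
    return normalized in EXPLICIT_YES
```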

Some actions should never proceed without required checks, regardless of how persuasive the caller sounds. That includes the most sensitive changes to identity, access, and payouts.

If the workflow becomes too risky or too uncertain, the caller should move to a human review path that keeps the interaction controlled and calm.

Fraud prevention still has to respect privacy and data-handling rules. NIST’s identity guidance and broader enterprise privacy expectations make it clear that stronger authentication does not mean collecting unlimited data. It means applying the right controls carefully.

Only collect what is necessary for the decision. Avoid asking for or repeating sensitive data unnecessarily, especially out loud on a phone call.
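One small, concrete form of data minimization is masking long digit runs (such as card or account numbers) before they are logged or read back to the caller. This is a minimal sketch: the eight-digit cutoff and the choice to keep the last four digits are assumptions, and it does not handle numbers split by spaces or dashes.

```python
import re


def mask_digits(text: str, keep_last: int = 4) -> str:
    """Mask runs of 8 or more digits, keeping only the last few visible."""

    def _mask(match: re.Match) -> str:
        digits = match.group(0)
        # Clamp so the visible part never exceeds the run length.
        keep = max(0, min(keep_last, len(digits)))
        return "*" * (len(digits) - keep) + digits[len(digits) - keep:]

    return re.sub(r"\d{8,}", _mask, text)
```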

Protected call flows should have strong permissions, retention controls, and auditability to prevent sensitive information from being overexposed.

Some decisions should always be made by trained humans, especially when the request is high-risk, ambiguous, or financially significant.

Fraud prevention should be measured in a way that protects the business without destroying customer experience. That means tracking both risk control and friction.

Track which call types are producing the most flagged attempts so you know where controls are doing useful work.

Too many false positives create customer friction. Too many false negatives create real loss. The balance matters.
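That balance can be tracked with two standard rates computed from reviewed call outcomes. The sketch below assumes each flagged or completed call has later been labeled as fraud or legitimate; the function name and the returned keys are illustrative.

```python
def fraud_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Compute false positive and false negative rates from outcome counts.

    tp: fraud calls that were flagged      fp: legitimate calls that were flagged
    tn: legitimate calls that passed       fn: fraud calls that passed
    """
    legitimate = fp + tn
    fraudulent = fn + tp
    return {
        # Share of legitimate callers who hit extra friction.
        "false_positive_rate": fp / legitimate if legitimate else 0.0,
        # Share of fraud attempts that got through.
        "false_negative_rate": fn / fraudulent if fraudulent else 0.0,
    }
```

With 5 legitimate calls flagged out of 100 and 2 fraud calls missed out of 10, this gives a false positive rate of 5% and a false negative rate of 20%.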

A good system should send risky calls to the right team with the right context, not just escalate more often.

Legitimate customers should still be able to pass checks cleanly. If completion rates collapse, the flow may be too difficult.

Track hang-ups, complaints, repeats, and drop-offs on security-sensitive flows so fraud protection does not create unnecessary friction.

CallBotics helps teams build fraud-safe voice workflows by combining structured intent handling, verification logic, risk-aware routing, and operational visibility. Developed by teams with over 18 years of BPO and contact center experience, the platform is built by people who understand how risk grows when queues are busy, workflows are inconsistent, and escalation context is weak.

What makes CallBotics different:

This makes CallBotics especially useful for teams that want strong operational fraud controls without turning the customer experience into a maze.

AI voice agents reduce fraud best when they do three things well: enforce consistent checks, detect risk signals early, and escalate safely when confidence is low. The value is not in replacing judgment entirely. It is in making the risky parts of the workflow more controlled, more visible, and less dependent on rushed human inconsistency.

That is why the strongest phone-fraud strategy is not automation alone. It is automation combined with verification discipline, risk-aware escalation, and clean operational review. When deployed well, AI voice agents help protect high-risk call flows while keeping the experience simpler for legitimate customers.
